Retail Cybersecurity in 2026: Rethinking Security for the Modern Retail Enterprise

Why traditional security models are failing modern retail — and the architectural shift CISOs need to protect loyalty data, cloud infrastructure, and guest trust.

In business, data has become a cornerstone of informed decision-making and strategic planning. To get the full value from it, organizations are increasingly turning to Data Observability, a practice that helps companies understand the state of their data and data systems and, in doing so, realize a range of business benefits. In this article, we explore what Data Observability is and the advantages it offers modern businesses.

Data Observability works like a telescope trained on a company’s data landscape, offering a panoramic view of data pipelines, structures, and flows. It gives organizations a real-time grasp of the health, quality, and movement of data within their ecosystem. With strong observability practices in place, businesses can build tools and processes that detect data bottlenecks, prevent downtime, and keep their data-driven operations consistent.

Before delving into the benefits of Data Observability, let’s first understand the challenges that businesses typically face:

Locating Appropriate Datasets: The sheer volume of available data can make it hard to identify and access the right datasets for analysis and decision-making.

Ensuring Data Reliability: Trustworthy insights depend on data accuracy. Ensuring that the data is reliable and up-to-date is crucial for making informed business choices.

Managing Data Dynamics: The ever-changing nature of data – in terms of volume, structure, and content – poses a considerable challenge for maintaining consistent data quality.

Impact of Changing Data: When the data feeding into models and algorithms changes, outcomes and predictions can also shift. Managing this variability is crucial for maintaining reliable results.

Visibility Gap: Executing complex processes like models, jobs, and SQL queries without proper visibility can lead to inefficiencies and operational blind spots.

Operational Performance: High-performance data operations are essential for productivity. Challenges in this area can hinder efficient business processes.

Cost and Budget Concerns: Unforeseen cost overruns, poor spend forecasting, and weak budget tracking can disrupt financial planning.

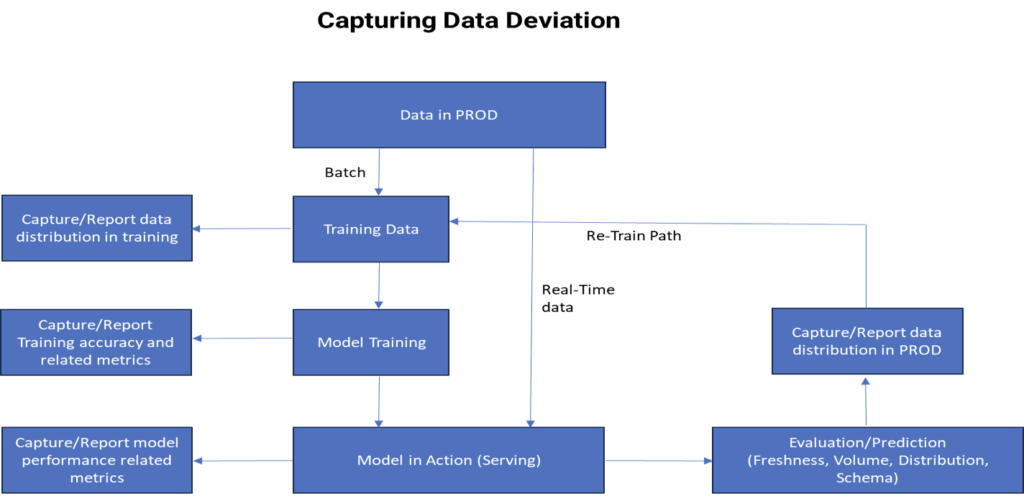

Data observability can be implemented as a “rule” inside our data pipeline.

For instance, if the “freshness check” rule is triggered because the data is stale, the “terminator” wakes up and halts the pipeline. This stops stale data from flowing downstream and protects the quality and trust that a single source of truth (SSOT) depends on.
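The rule-plus-terminator pattern can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation or any product's API: the `Rule`, `freshness_rule`, `terminator`, `run_pipeline`, and `PipelineHalted` names are hypothetical, and each batch is assumed to carry a timezone-aware `last_updated` timestamp.

```python
# Hypothetical sketch of the "rule" + "terminator" idea; not a specific product's API.
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Callable


class PipelineHalted(Exception):
    """Raised by the terminator when a blocking rule fails."""


@dataclass
class Rule:
    name: str
    check: Callable[[dict], bool]  # returns True when the batch passes


def freshness_rule(max_age: timedelta) -> Rule:
    """Build a rule that passes only if the batch was updated within max_age."""

    def check(batch: dict) -> bool:
        # Assumes batch["last_updated"] is a timezone-aware datetime.
        age = datetime.now(timezone.utc) - batch["last_updated"]
        return age <= max_age

    return Rule(name="freshness_check", check=check)


def terminator(batch: dict, rules: list[Rule]) -> None:
    """Stop the pipeline at the first failing rule so bad data never lands downstream."""
    for rule in rules:
        if not rule.check(batch):
            raise PipelineHalted(f"rule '{rule.name}' failed; pipeline halted")


def run_pipeline(batch: dict, rules: list[Rule]) -> str:
    terminator(batch, rules)  # gate runs before any transform or load step
    # ... transform and load steps would follow here ...
    return "loaded"
```

With `rules = [freshness_rule(timedelta(hours=24))]` (the 24-hour limit is an arbitrary example), a batch updated two hours ago returns `"loaded"`, while one last updated three days ago raises `PipelineHalted` before any load step runs. Raising an exception, rather than logging and carrying on, is what turns the rule into a hard stop.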

To tackle these challenges head-on, businesses are integrating a range of tools and technologies under the umbrella of Data Observability. Some key components of this implementation include:

Insights through ML (Amazon SageMaker / Azure Machine Learning / GCP Vertex AI): These platforms support training and deploying machine learning models, so that data-driven predictions and decisions are rooted in accurate insights.

ETL Automation (AWS Glue / Azure Data Factory / GCP Dataflow): Built for ETL (Extract, Transform, Load) processes, these services automate data preparation and movement, helping ensure data quality and consistency.

Data Storage (Amazon Redshift / Azure Synapse / GCP BigQuery): Storage solutions such as Amazon S3, PostgreSQL, and Redshift offer scalable, secure data storage.

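Output Formats (CSV, JSON, Avro, Parquet, ORC): The choice of format affects storage efficiency and processing speed; columnar formats such as Parquet and ORC, for example, are typically more compact and faster to query for analytics than row-based CSV or JSON.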

Data Analysis Tools (Amazon QuickSight / Microsoft Power BI / Google Data Studio): Tools such as Amazon Athena and Amazon QuickSight support querying and visualizing data, enabling efficient analysis and data-driven decision-making.

Realizing Outcomes: Use Cases from the Pharma Industry

The implementation of Data Observability yields several valuable outcomes and use cases:

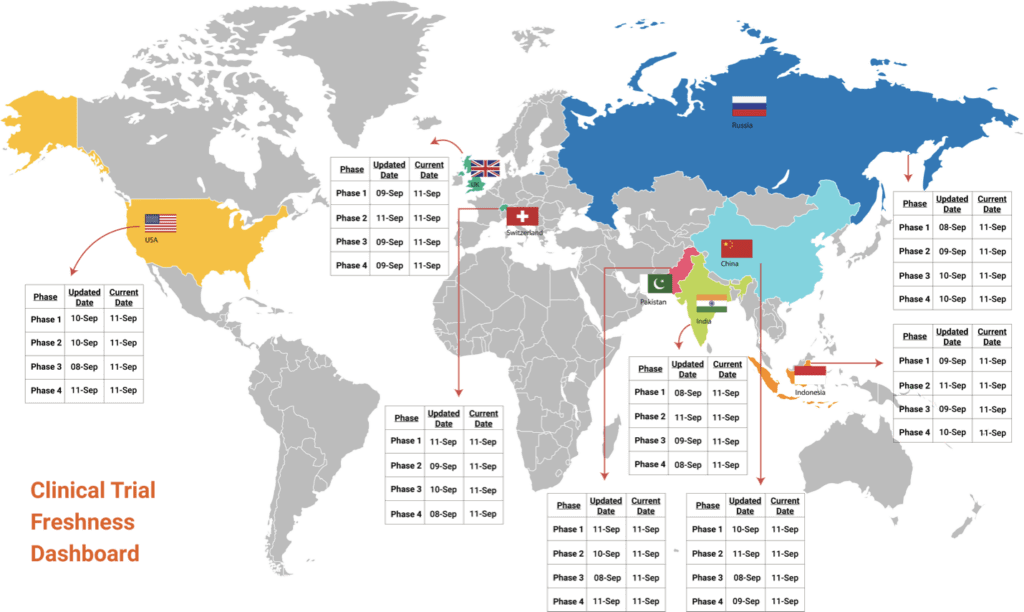

1. Freshness: Organizations can ensure that the data they are working with is up-to-date, reducing the risk of outdated insights driving decisions.

E.g.: Clinical trial data must be current in every system, both to meet regulators' reporting requirements and because principal investigators make medical decisions based on it.

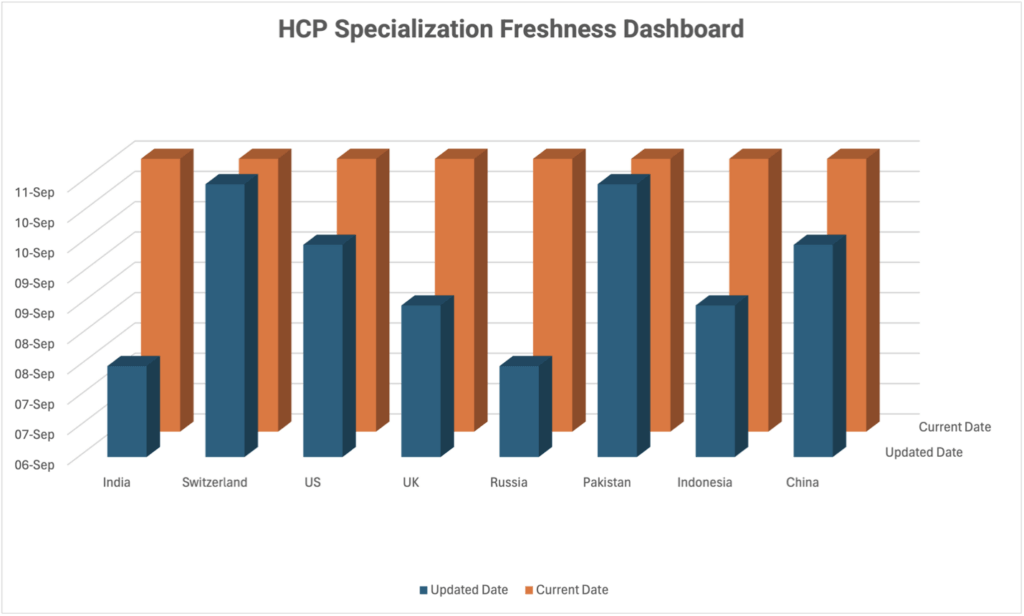

The specialization and registration of every healthcare professional (HCP) must be kept up to date so that product sales are not affected and so that the company complies with regulations prohibiting the marketing of products for off-label use.

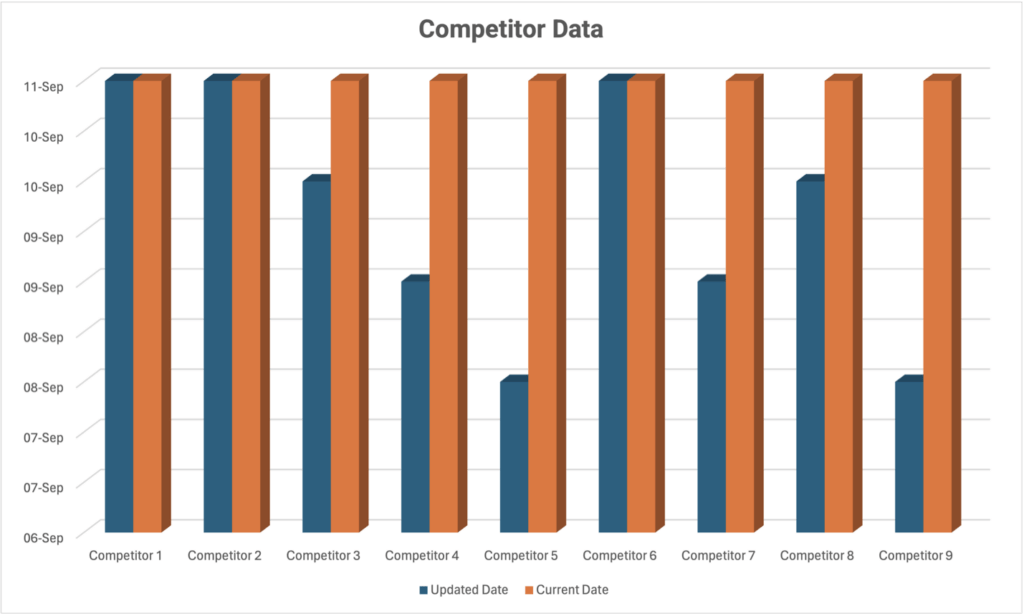

Competitor data should be current to support better marketing decisions.

2. Distribution: Data movement across various stages can be monitored, ensuring a smooth flow of information without bottlenecks.

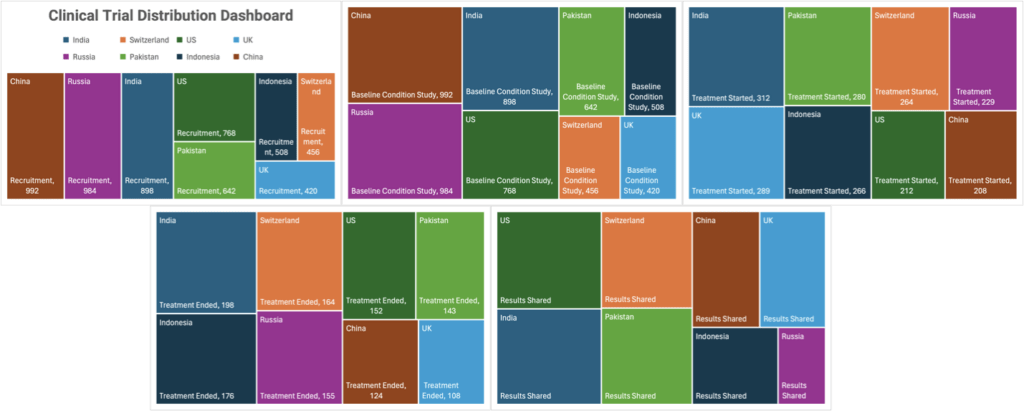

E.g.: A clinical trial moves through a series of stages, from recruitment to the baseline-condition study to treatment (treatment provided and results).

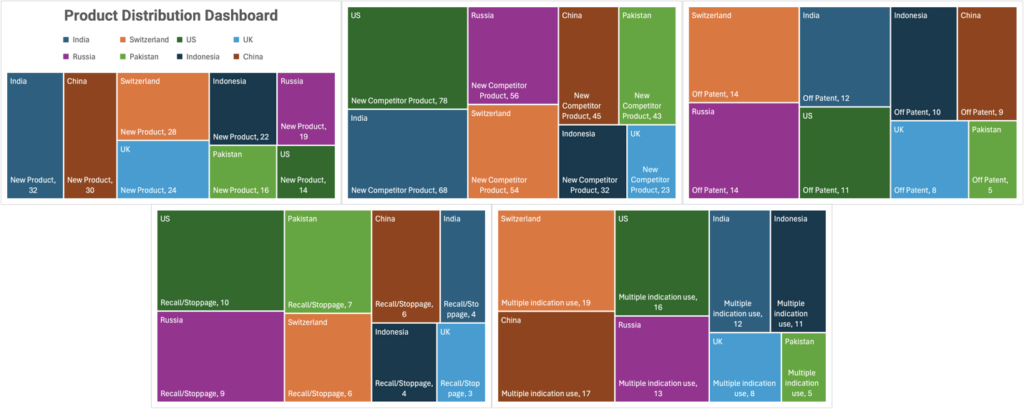

New products or competitors entering the market, products going off patent, and recalls or stoppages all shift the data distribution; these shifts are captured and reported to the business team.

When a product is approved for multiple indications, that change is captured and reported.
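A distribution check compares how records spread across categories (trial stages, products, indications) with a baseline. The source does not name a metric, so this sketch swaps in total variation distance, one common choice; the function names and the 0.2 threshold are illustrative assumptions, not a prescribed method.

```python
# Hypothetical distribution check using total variation distance (an assumed metric choice).
from collections import Counter


def category_shares(values: list[str]) -> dict[str, float]:
    """Return each category's share of the records; shares sum to 1."""
    counts = Counter(values)
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}


def total_variation_distance(p: dict[str, float], q: dict[str, float]) -> float:
    """Half the L1 distance between two categorical distributions (0 = identical, 1 = disjoint)."""
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in categories)


def check_distribution(baseline: list[str], current: list[str], threshold: float = 0.2) -> dict:
    """Compare the current batch's category mix with a baseline and flag large shifts."""
    p, q = category_shares(baseline), category_shares(current)
    tvd = total_variation_distance(p, q)
    return {
        "tvd": round(tvd, 3),
        "drifted": tvd > threshold,
        # Categories absent from the baseline, e.g. a new competitor or indication.
        "new_categories": sorted(set(q) - set(p)),
    }
```

With a baseline of 60% recruitment, 30% baseline, and 10% treatment records and a current batch of 20%, 30%, and 50%, the distance is 0.4 and the batch is flagged. Listing categories that never appeared in the baseline surfaces events such as a new competitor or a newly approved indication even when the overall shift is small.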

3. Volume: Monitoring data volume helps in scaling resources effectively, accommodating fluctuations in data influx.

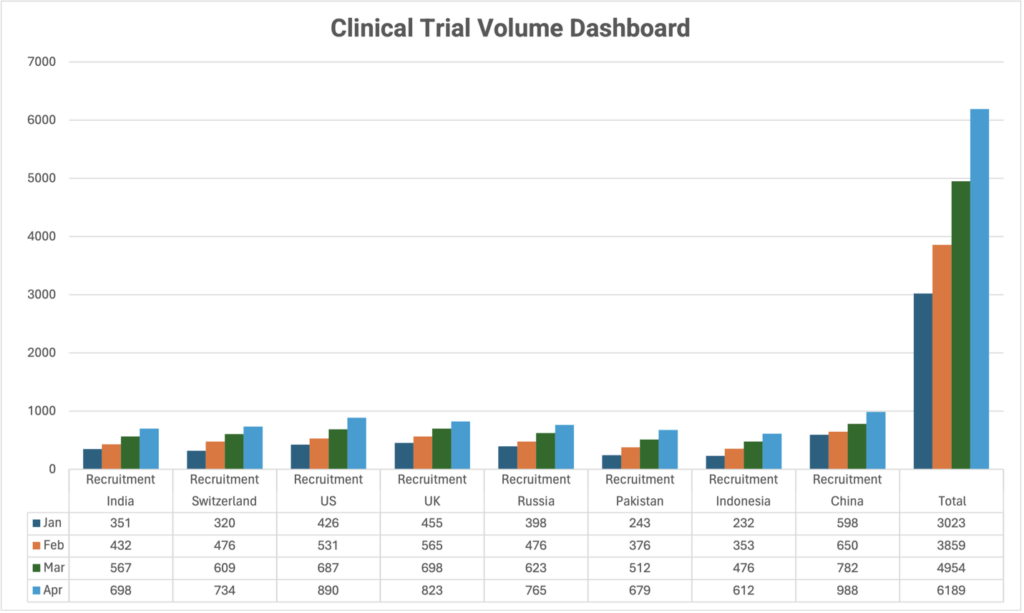

E.g.: A surge in clinical trial data is captured when patient recruitment rises due to a sudden increase in adverse events, or when a new site is activated and begins recruiting patients.

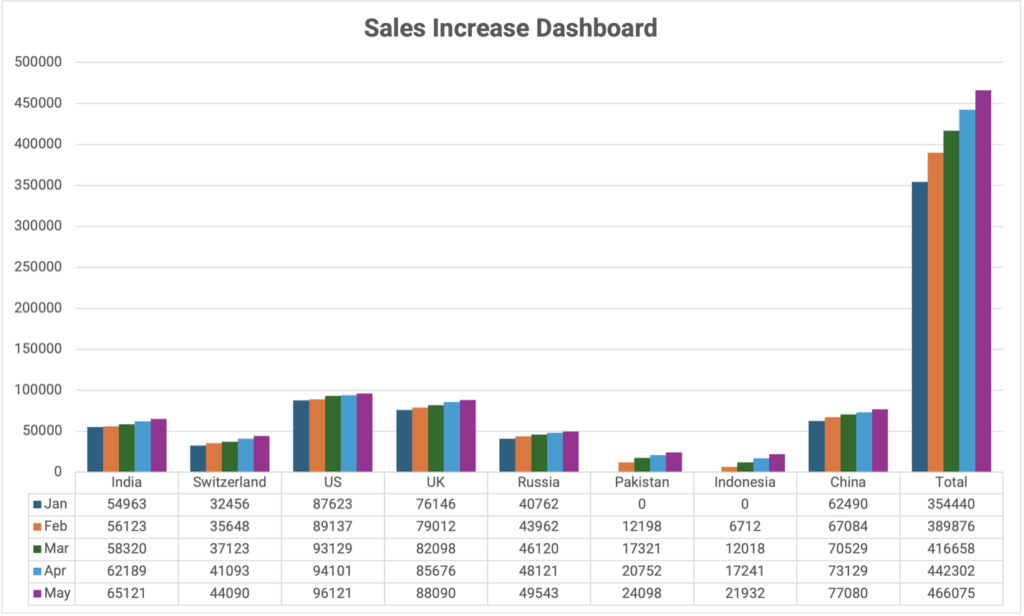

An increase in sales data volume is captured when reach expands after approval from the relevant authority (for a new state or country).

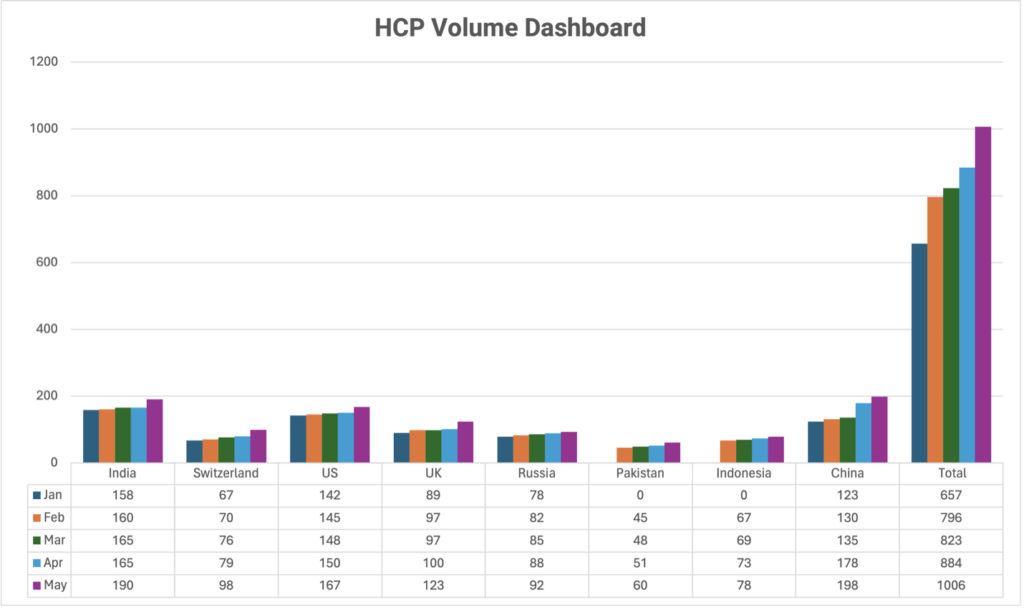

An increase in HCP data is captured once a product is approved to enter a new geography or the drug is approved for multiple indications.
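A volume check often compares each load's row count with recent history. The sketch below uses a z-score against a trailing window; the function name and the three-standard-deviation threshold are assumptions chosen for illustration, not part of any specific tool.

```python
# Hypothetical volume check: z-score of today's row count against a trailing window.
import math
import statistics


def volume_anomaly(history: list[int], today: int, z_threshold: float = 3.0) -> dict:
    """Flag today's count if it is more than z_threshold standard deviations from the trailing mean."""
    if len(history) < 2:
        raise ValueError("need at least two historical counts to estimate spread")
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # sample standard deviation
    if stdev == 0:
        # Perfectly flat history: any change at all is treated as an infinite deviation.
        z = 0.0 if today == mean else math.copysign(math.inf, today - mean)
    else:
        z = (today - mean) / stdev
    direction = "spike" if z > 0 else "drop" if z < 0 else "flat"
    return {"z_score": z, "anomaly": abs(z) > z_threshold, "direction": direction}
```

For a history of `[1000, 1020, 980, 1010, 990]` daily rows, a load of 1,600 rows is flagged as a spike while 1,005 is not. A flag like this is better treated as a prompt for review than as a reason to halt, since a jump can be legitimate when a new site is activated or a product enters a new geography.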

4. Schema: Ensuring consistency in data structures prevents conflicts and errors when processing information.

E.g.: When vendor data arrives with an incorrect structure, the problem is captured and reported before the data is sent to the SDTM system for study analysis.

When old and new product codes for the same product arrive together, the mix is captured and all of these source product codes are mapped to a single master product.
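Both schema examples can be expressed as simple pre-load checks. In this sketch, `EXPECTED_SCHEMA`, `PRODUCT_CODE_MAP`, and every field name and code value are hypothetical placeholders; a real vendor specification or MDM product hierarchy would replace them.

```python
# Hypothetical schema gate and product-code mapping; names and values are placeholders.
from typing import Any

# Assumed structure of an incoming vendor record (placeholder fields).
EXPECTED_SCHEMA: dict[str, type] = {
    "subject_id": str,
    "visit_date": str,
    "lab_value": float,
}

# Assumed mapping of legacy and current source product codes to one master product.
PRODUCT_CODE_MAP: dict[str, str] = {
    "PRD-001": "MASTER-A",     # legacy code
    "PRD-001-V2": "MASTER-A",  # newer code for the same product
    "PRD-002": "MASTER-B",
}


def schema_errors(record: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """List missing fields, type mismatches, and unexpected fields for one record."""
    errors = []
    for field, expected in schema.items():
        if field not in record:
            errors.append(f"missing field '{field}'")
        elif not isinstance(record[field], expected):
            errors.append(
                f"field '{field}' expected {expected.__name__}, got {type(record[field]).__name__}"
            )
    for field in sorted(record.keys() - schema.keys()):
        errors.append(f"unexpected field '{field}'")
    return errors


def map_to_master(codes: list[str], mapping: dict[str, str]) -> tuple[dict[str, list[str]], list[str]]:
    """Group source codes under their master product; return unknown codes for reporting."""
    grouped: dict[str, list[str]] = {}
    unmapped: list[str] = []
    for code in codes:
        master = mapping.get(code)
        if master is None:
            unmapped.append(code)
        else:
            grouped.setdefault(master, []).append(code)
    return grouped, unmapped
```

Calling `map_to_master(["PRD-001", "PRD-001-V2", "PRD-999"], PRODUCT_CODE_MAP)` groups the first two codes under `MASTER-A` and returns `PRD-999` as unmapped, which is exactly the kind of case to report before data moves on. The type check is deliberately strict: an integer `lab_value` would be flagged, which may or may not suit a given vendor feed.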

5. Lineage: Tracking the lineage of data allows businesses to understand where data comes from and how it’s transformed, aiding in data quality assurance.

E.g.: The path of clinical trial patient data from EDC (Electronic Data Capture) to SDTM (Study Data Tabulation Model) to the study report can be captured and reported.

Likewise, the path of HCP data (first and last name, specialization, phone number, etc.) received from multiple sources can be captured and reported as it moves from the raw layer to the clean layer to the curated layer, passing MDM match-and-merge rule checks before arriving at a golden record.
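Capturing lineage amounts to recording, for every dataset, what it was derived from and how. The sketch below keeps that record as an in-memory graph; the `LineageGraph` class and the dataset names used with it are hypothetical, and a production setup would typically persist this metadata in a catalog or dedicated lineage tool instead.

```python
# Hypothetical in-memory lineage store; a sketch, not a specific lineage tool's API.
from collections import defaultdict


class LineageGraph:
    """Each recorded edge says that `target` was derived from `source` via `transformation`."""

    def __init__(self) -> None:
        self._upstream: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def record(self, source: str, target: str, transformation: str) -> None:
        self._upstream[target].append((source, transformation))

    def trace(self, node: str) -> list[str]:
        """Return hops from `node` back to its original sources (depth-first; each node expanded once)."""
        hops: list[str] = []
        seen: set[str] = set()

        def walk(current: str) -> None:
            # .get avoids inserting empty entries into the defaultdict.
            for source, transformation in self._upstream.get(current, []):
                hops.append(f"{source} -> {current} [{transformation}]")
                if source not in seen:
                    seen.add(source)
                    walk(source)

        walk(node)
        return hops
```

Recording `EDC -> SDTM` and `SDTM -> study_report` edges and then calling `trace("study_report")` returns the two hops back to `EDC`. The same calls can model the HCP flow: several sources feeding a raw layer, then clean and curated layers, ending in a golden record whose full ancestry `trace` lists.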

Outcomes (Infographic)

15% lower incidence of regulatory observations (clinical trial)

5% improvement in regulatory compliance (clinical trial)

10% improvement in decision-making ability and performance (commercial)

Harnessing the Business Benefits:

Implementing robust Data Observability practices offers a range of business benefits that foster growth and efficiency:

Improves Data Accuracy: By having real-time insights into data health, organizations can identify and rectify inaccuracies before they affect decisions.

Timely Data Delivery: With observability, data downtime is minimized, so insights are available when needed and decisions can be both timely and informed.

Early Issue Detection: Observability allows businesses to spot potential data concerns in their infancy, preventing them from escalating into more significant problems.

Prevents Data Downtime: By identifying bottlenecks and performance issues in data pipelines, observability minimizes disruptions, leading to uninterrupted operations.

Cost Optimization: Through constant monitoring, businesses can optimize resources effectively, preventing unnecessary costs and improving budget forecasting.

Conclusion: Illuminating the Path Forward

Data observability not only provides health insights (through predictive and advanced analytics) but also helps collect the metadata used to keep data catalogs up to date. Capturing end-to-end data lineage is the key to understanding your single source of truth.

In conclusion, Data Observability emerges as a critical enabler for businesses seeking to thrive in a data-centric landscape. By addressing data challenges at their core, it empowers organizations to make accurate decisions, foster efficiency, and propel growth in an increasingly competitive environment. It’s not just about observing data; it’s about observing success.
